Johncfii


This is a modification to my solar charge controllers (SCCs), for charging lithium batteries, that I haven’t seen mentioned elsewhere. My SCCs are Outback Solar FM80s, but from reading the manuals for other similar charge controllers, such as the Victron “SmartSolar”, the Schneider “Conext”, and the Morningstar “TriStar”, it appears that this modification might apply equally well to other brands of similar SCC.

This modification is just a bit complex to explain, so this is a rather long post.

I have a DIY 42 kWh lithium battery bank that is working wonderfully well for us. It is a lot nicer to rely on than our old AGM lead-acid bank was.

I know that some forum users here consider it “Pollyanna-ish” to want to limit the state of charge (SOC) of our lithium batteries to something like between 15% and 85% of full capacity. But going the DIY route, building a large lithium pack from low-cost 280 Ah cells direct from China, allowed me to install a lot of “extra” battery capacity, which means not needing to always draw 100% out of the batteries. Yet I still have the extra capacity available if a long cloudy period is expected. So, I figure, if it might get us an extra two or three years’ use out of these batteries, why not limit the stress on the cells?

The problem is, with my Outback FlexMax charge controllers, how do you reliably control charging to terminate at something like 85% of full charge? Like Victron, Schneider, and others, the Outback FM80s are designed to terminate charge based on time in ABSORB mode, on declining tail current (which Outback calls Charged Return Amps), or on some combination of measured battery voltage, time in ABSORB, and declining charge current. None of these is a direct measure of SOC.

As an aside, it happens that I don’t ever directly rely on my BMS to interrupt charge or discharge, and I don’t pass charging current or discharge current through my BMS. I could not bear the aesthetics of inserting these “little” BMS boards (even the ones rated at 120 amps) in line with my carefully chosen, lovely and expensive, large-gauge welding cables that connect my battery bank. Instead, I use the alarm outputs of my much-less-expensive 30-amp JBD BMSs, via control relays, to stop charging or discharging as appropriate, if BMS alarm conditions are ever tripped.

One often-mentioned way that these charge controllers can be set up to charge lithium to something less than 100% is to carefully select a lower ABSORB mode voltage, and pick a tail current that terminates charge in the neighborhood of 85%. Then you set a “pseudo-FLOAT” voltage that keeps the SCC supplying current to satisfy your load, while allowing only some small current to flow in or out of the battery bank. Of course, we don’t normally FLOAT lithium batteries, but we can use this slightly lower “FLOAT” stage voltage setting to keep the SCC working to satisfy the load without further charging the battery bank.

After weeks and weeks of trying to find ABSORB voltage/time/tail current/FLOAT voltage settings to optimize charging to about 85% of full charge, I found that to be an exercise in frustration. It was easy to find a combination of settings that would give good results when the batteries were being charged with no load on the system. And it was easy to find a different combination of settings that would give good charging results while drawing a moderate load on the system. And it was easy to find a still different combination of settings that would give good results when charging the batteries while drawing a heavy load from the system. But it was impossible, at least for me, to find a single combination of settings that gave good results under the full range of sun and load conditions. Since I have to travel for work for two weeks at a time, I needed a wife-proof scheme that would allow her to operate the system with minimal intervention.

The modification that I’m now using is possible because these charge controllers have an external temperature sensor input, intended to adjust lead-acid battery charging voltages, depending on battery temperature. Because we don’t use temperature compensation for lithium batteries, this temperature sensor input is available to utilize in a different way.

The temperature sensor, which the manufacturers call either an RTS (Remote Temperature Sensor) or an RTD (Remote Temperature Detector), is simply a resistive element whose electrical resistance changes with temperature. For the Outback SCC, both the ABSORB stage and FLOAT stage voltages are calibrated for room temperature. But if an RTS is connected to the system, then for every degree centigrade below room temperature that the RTS detects, the stage voltages are increased by 0.06 volts (for a 24-volt system), and for every degree above room temperature they are decreased by the same amount, up to a user-programmable limit.

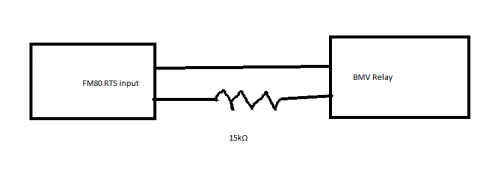
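
The compensation arithmetic can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 25 °C reference temperature is my guess at what "room temperature" means here, the 0.4 V limit is the value I have programmed, the function name is made up, and only the upward limit is modeled (how the controller behaves at high sensed temperatures is not captured here).

```python
def compensated_voltage(setpoint_v, sensed_temp_c, ref_temp_c=25.0,
                        slope_v_per_c=0.06, max_raise_v=0.4):
    """Return a stage voltage after temperature compensation.

    ref_temp_c is an ASSUMED reference ("room temperature").
    slope_v_per_c is the 0.06 V per degree C figure for a 24-volt system.
    max_raise_v is the user-programmed upper limit on the increase.
    """
    # Colder than the reference gives a positive offset, hotter a negative one.
    offset = slope_v_per_c * (ref_temp_c - sensed_temp_c)
    # Only the upward adjustment is clamped in this sketch.
    offset = min(offset, max_raise_v)
    return round(setpoint_v + offset, 2)


if __name__ == "__main__":
    for temp_c in (25.0, 20.0, 10.0):
        print(temp_c, compensated_voltage(27.1, temp_c))
```

With a 0.4 V limit and 0.06 V per degree, the controller only has to believe the battery is about 7 °C colder than the reference to apply the full increase.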

But instead of using the actual RTS, a fixed-value resistor can be switched into circuit across the RTS input terminals by a relay, to fool the solar charge controller into temporarily increasing or decreasing the charge stage voltage. By experimentation, I found that switching a 15k ohm resistor across the RTS input terminals increases the stage charge voltage by my pre-programmed limit of 0.4 volts.

To use this feature to help control charging of a lithium battery, I combine it with a coulomb-counting SOC meter that has a programmable relay output. The SOC meter that I chose is the Victron BMV-712, which I’ve found to be quite accurate, needing recalibration very infrequently once the Peukert exponent and battery charge efficiency factor were optimized by trial and error. My Victron BMV-712 relay output is wired so that the relay contacts are in series with a 15k ohm resistor across the RTS input terminals. The Victron relay contacts are programmed to open when my desired upper-limit SOC is reached.

For example, my ABSORB stage voltage is set in the FM80 to 27.1 volts. FLOAT stage voltage is set to 26.8 volts. Below my desired SOC charge termination setting, the 15k ohm resistor tells the FM80 to increase ABSORB and FLOAT voltages to 27.5 and 27.2 volts respectively. When 85% SOC is reached, the 15k ohm resistor is switched out of circuit and ABSORB voltage drops to 27.1 volts. Charging current drops substantially, the SCC quickly evaluates the batteries as being fully charged, and the SCC output voltage drops to FLOAT level, now providing current to any load without further charging the battery bank. With a carefully selected FLOAT voltage setting, and if the sun is still out, the solar panels continue to satisfy any load without draining the batteries. The Victron BMV-712 can even be programmed to switch the 15k ohm resistor back into circuit if the battery SOC drops by as little as 1%, thereby slowly cycling the SOC between 84% and 85% if load and sun conditions change through the afternoon. This way, when the sun finally goes down, the batteries are always sitting between 84% and 85% SOC, as long as sun conditions were favorable.
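
The switching behavior in this example can be sketched as a small piece of hysteresis logic. This is a rough Python model, not anything that runs on the Victron or the FM80: the class and function names are made up for illustration, and the relay is simply assumed to open at 85% and close again at 84%.

```python
from dataclasses import dataclass


@dataclass
class SocRelay:
    """Hypothetical model of the SOC meter's relay with 1% hysteresis."""
    open_at_soc: float = 85.0    # resistor switched OUT at or above this SOC
    close_at_soc: float = 84.0   # resistor switched back IN at or below this SOC
    closed: bool = True          # closed means the 15k resistor is in circuit

    def update(self, soc: float) -> bool:
        if self.closed and soc >= self.open_at_soc:
            self.closed = False
        elif not self.closed and soc <= self.close_at_soc:
            self.closed = True
        return self.closed


def setpoints(relay_closed: bool, absorb_v: float = 27.1,
              float_v: float = 26.8, boost_v: float = 0.4):
    """Return (ABSORB, FLOAT) voltages the controller would be working to."""
    boost = boost_v if relay_closed else 0.0
    return round(absorb_v + boost, 2), round(float_v + boost, 2)


if __name__ == "__main__":
    relay = SocRelay()
    for soc in (80.0, 84.9, 85.0, 84.5, 84.0, 85.0):
        print(soc, setpoints(relay.update(soc)))
```

Between 84% and 85% the relay keeps whatever state it last had, which is what stops it from chattering as load and sun conditions change.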

Another big advantage of using this method to terminate charging is that charging current does not taper off as the batteries approach my desired SOC. For example, using the ABSORB voltage, time, and tail current method to terminate charging, I have seen solar energy wasted when charging current starts to taper off at as low as 70% SOC. Bumping the ABSORB voltage up until exactly the desired SOC is reached prevents this inefficient tapering of charge current. This increases the efficiency of solar energy harvest under some combinations of load, solar conditions, and battery SOC. In my observation, limiting SOC to 85% allows the batteries to keep charging at the highest rate my system can deliver, right up to 85%. This might still be true at 90% SOC, but I have not tried it.

Yet another advantage is that I also use the RTS input to terminate charging in case of a BMS cell or pack high-voltage alarm. Because I don’t rely on the BMS directly to interrupt charging in the event of a battery or cell over-voltage condition, I needed another way to shut down charging current. In the event of a BMS over-voltage alarm, a different control relay switches a different resistor, this one 1.5k ohm, across the RTS input terminals. This fools the charge controller into thinking that the batteries are very hot, and charge voltage drops to zero, without the potential harm that might occur if the charge controllers are just turned OFF while solar input is connected. Or a slightly different resistor value can be selected to reduce charge voltage to some other low voltage.
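
To show what the RTS input sees with the two relays, here is a small Python sketch. It assumes both resistor branches are simply wired in parallel across the RTS terminals, which is an assumption for illustration (the alarm relay could just as well be wired to disconnect the 15k branch). The function and constant names are made up.

```python
from typing import Optional

SOC_BOOST_OHMS = 15_000.0     # switched by the SOC meter relay
ALARM_CUTOFF_OHMS = 1_500.0   # switched by the BMS over-voltage alarm relay


def rts_input_resistance(soc_relay_closed: bool,
                         alarm_relay_closed: bool) -> Optional[float]:
    """Resistance across the RTS terminals, or None for an open circuit.

    ASSUMES the two branches are in parallel across the same terminals.
    """
    branches = []
    if soc_relay_closed:
        branches.append(SOC_BOOST_OHMS)
    if alarm_relay_closed:
        branches.append(ALARM_CUTOFF_OHMS)
    if not branches:
        return None
    # Standard parallel-resistance formula: 1/R = sum(1/Ri).
    return 1.0 / sum(1.0 / r for r in branches)


if __name__ == "__main__":
    for soc_closed in (False, True):
        for alarm_closed in (False, True):
            print(soc_closed, alarm_closed,
                  rts_input_resistance(soc_closed, alarm_closed))
```

Under that wiring assumption, both relays closed at once gives roughly 1.36k ohm, a little lower than the 1.5k ohm alarm resistor alone. Since the lower resistance is the one that reads as "hot" on my controllers, the alarm branch would be expected to win, but that is worth confirming on the bench rather than taking from this sketch.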

I hope my long explanation is clear, and that it might be useful to other forum users interested in limiting the SOC of their lithium batteries to a maximum of something less than 100%.
