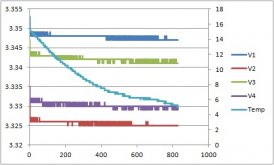

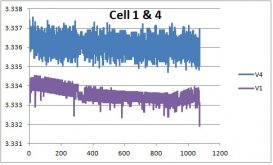

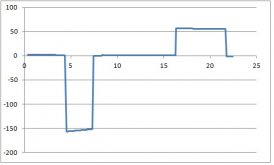

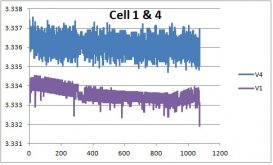

I'm about done with this design. Time to move on to new and improved. But first, let me document some results. I have a 4-cell battery. Each cell tap has its own resistive voltage divider referenced to ground so that a 2V-referenced ADC can safely measure the voltage. Measured tap voltages are (about): 3.3V, 6.6V, 9.9V and 13.2V. Cell 1 voltage is a direct measurement. But to get (for example) Cell 4 voltage, the 9.9V measurement must be subtracted from the 13.2V measurement. Because the subtraction combines the errors of two measurements, Cell 4 ripple and measurement errors are about twice as large as Cell 1's.
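The subtraction described above can be sketched in a few lines of Python. This is only an illustration, not the firmware running on the BMS: the function names and the divider resistor values are hypothetical, and only the four tap voltages come from the measurements above.

```python
def tap_from_adc(adc_volts, r_top, r_bottom):
    """Undo a resistive divider: scale the ADC pin voltage back up to the tap voltage.

    r_top and r_bottom are hypothetical divider resistor values in ohms.
    """
    return adc_volts * (r_top + r_bottom) / r_bottom


def cell_voltages(tap_volts):
    """Turn ground-referenced tap voltages into per-cell voltages.

    Cell 1 is the first tap as-is; every other cell is the difference
    between its tap and the tap below it.
    """
    cells = []
    previous = 0.0
    for tap in tap_volts:
        cells.append(tap - previous)
        previous = tap
    return cells


# Approximate tap voltages from the post (volts, referenced to ground).
taps = [3.3, 6.6, 9.9, 13.2]
cells = cell_voltages(taps)
for number, volts in enumerate(cells, start=1):
    print(f"Cell {number}: {volts:.3f} V")

# Hypothetical 90k/10k divider: 1.0 V at the ADC pin means 10 V at the tap.
example_tap = tap_from_adc(1.0, 90e3, 10e3)
```

Any error at the ADC pin is multiplied by the same divider ratio that `tap_from_adc` applies, so the upper taps pay for their larger dividers before the subtraction even happens.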

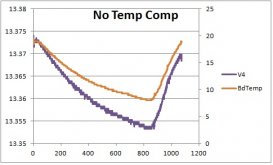

Neglecting the few spikes, Cell 1 ripple is about 0.7 mV. Cell 4 has perhaps 2 mV ripple.

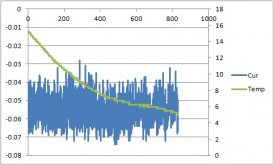

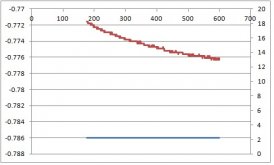

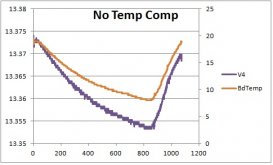

The circuit also has a huge thermal drift problem. Temperature in this plot goes from a maximum of 19 °C down to 8 °C. Over that range the measured voltage drifts by 20 mV. I'm not sure, but I believe the ADC's internal voltage reference is the problem.

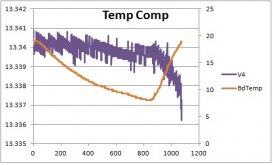

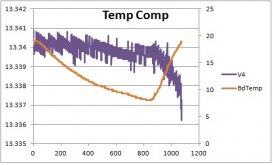

Much of the thermal error can be compensated. Not sure why the voltage starts dropping at the 900-minute mark. There's some ripple aliasing, perhaps caused by the ADS1115 I2C communication?
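One simple way to do this kind of compensation is a first-order (linear) temperature correction: fit a drift slope in volts per °C from logged data, then subtract the drift relative to a reference temperature. The sketch below assumes the drift is linear, which may not match what was actually done here. The sample data is synthetic, the function names are hypothetical, and the 1.8 mV/°C slope is only a round number of the same order as 20 mV over 11 °C.

```python
def fit_drift_slope(temps_c, volts):
    """Least-squares slope of voltage versus temperature, in volts per degree C."""
    n = len(temps_c)
    mean_t = sum(temps_c) / n
    mean_v = sum(volts) / n
    sxx = sum((t - mean_t) ** 2 for t in temps_c)
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in zip(temps_c, volts))
    return sxy / sxx


def compensate(v_measured, temp_c, slope, ref_temp_c=19.0):
    """Remove the fitted linear drift, referring the reading to ref_temp_c."""
    return v_measured - slope * (temp_c - ref_temp_c)


# Synthetic log (NOT real data): a constant 3.333 V cell read through
# an ADC that drifts by an assumed 1.8 mV per degree C.
assumed_slope = 0.0018
temps = [19.0, 16.0, 13.0, 10.0, 8.0]
readings = [3.333 + assumed_slope * (t - 19.0) for t in temps]

slope = fit_drift_slope(temps, readings)
corrected = [compensate(v, t, slope) for v, t in zip(readings, temps)]
print(f"fitted slope: {slope * 1000:.2f} mV/degC")
print("corrected:", [round(v, 4) for v in corrected])
```

A linear fit only removes the part of the error that tracks temperature, so a slow drop like the one starting at the 900-minute mark would remain in the corrected trace.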

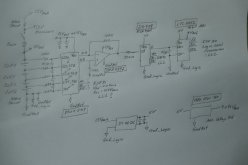

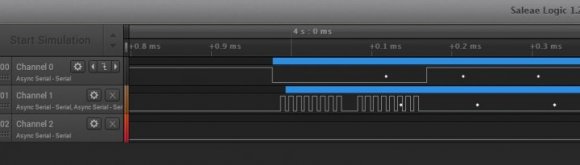

This is the entire circuit in operation. The BMS can disable loads or charging systems, monitor cell voltages, monitor the vehicle chassis battery voltage, charge the chassis battery (under specific conditions), measure shunt current, count coulombs, measure cell and external temperatures, log data to SD memory, and send data via WiFi to my iPad. Looks like I need a packaging guru. Ha. Got to get some credit for matching the blue paper tape (which serves as wire-to-connector strain relief) with the blue 180 Ah CALB cells.
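For readers unfamiliar with coulomb counting, the idea is to integrate the shunt current over time to track charge moved in or out of the pack. Here is a minimal sketch using trapezoidal integration. The sample format, the sign convention (positive current means charging), and the function name are assumptions for illustration, not a description of this BMS's code. Only the 180 Ah capacity comes from the cells mentioned above.

```python
def coulomb_count(samples, start_ah=0.0):
    """Integrate shunt current into amp-hours.

    samples: list of (time_seconds, current_amps) tuples in time order.
    Positive current is taken to mean charging (an assumed convention).
    """
    amp_hours = start_ah
    for (t0, i0), (t1, i1) in zip(samples, samples[1:]):
        # Trapezoid rule: average current over the interval times its length.
        amp_hours += 0.5 * (i0 + i1) * (t1 - t0) / 3600.0
    return amp_hours


CAPACITY_AH = 180.0  # nominal capacity of the CALB cells

# Synthetic example: a steady 10 A charge sampled over one hour.
samples = [(0.0, 10.0), (1800.0, 10.0), (3600.0, 10.0)]
charged_ah = coulomb_count(samples)
soc_change = charged_ah / CAPACITY_AH
print(f"charge added: {charged_ah:.2f} Ah ({soc_change * 100:.1f}% of capacity)")
```

Any offset error in the current measurement accumulates in the integral, so a counter like this usually needs to be resynchronized at a known state of charge from time to time.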

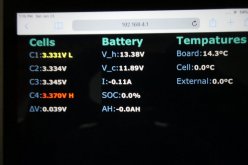

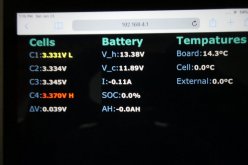

iPad data. V_h is the house battery while V_c is the chassis (starting) battery. All cells are at 3.333V. Cell 4 shows the largest error (it reads 3.370V). Time to move on to a new design.