paulca (New Member, joined Sep 13, 2022, 77 messages)

Hi guys, fairly new to lithium. I've built an AC-side, microcontroller-based charge controller for lead acid and have been running it for 2 years (lockdown drove me crazy, had to invent something).

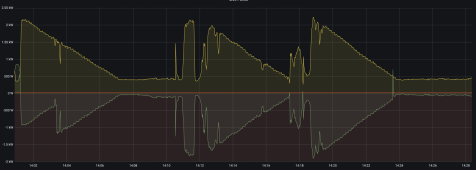

Now I've bought a 4.8 kWh lithium battery and a factory charge controller. However, after monitoring the response times of the algorithm the controller uses, I find it responds to change like a snail on tranquillisers. Whether discharging or charging, it seems to change the power delivered at a rate of ~350 W per MINUTE.

That's massively inefficient when clouds are flying by every minute: PV power goes from 3 kW to 500 W in four seconds, but charge power is still at 3 kW.
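To put a rough number on that lag, here is a minimal back-of-envelope sketch. It assumes the controller ramps linearly at the observed ~350 W per minute and, for simplicity, that PV drops instantly and then stays at the lower level (in reality the cloud may pass before the ramp finishes). The function name `ramp_lag` is made up for illustration.

```python
def ramp_lag(start_w, target_w, slew_w_per_min):
    """Return (minutes to reach target, energy mismatch in Wh) for a linear ramp."""
    delta_w = abs(start_w - target_w)
    minutes = delta_w / slew_w_per_min
    # The mismatch shrinks linearly from delta_w to 0, so its average is delta_w / 2.
    mismatch_wh = 0.5 * delta_w * (minutes / 60.0)
    return minutes, mismatch_wh


# Figures from the post: charge power stuck at 3 kW while PV has fallen to 500 W.
minutes, mismatch_wh = ramp_lag(3000, 500, 350)
print(f"{minutes:.1f} min to settle, about {mismatch_wh:.0f} Wh made up from elsewhere")
# prints "7.1 min to settle, about 149 Wh made up from elsewhere"
```

Under those assumptions, a single 3 kW to 500 W drop takes about seven minutes to follow, and the shortfall in the meantime has to come from somewhere other than PV.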

Most commercial systems seem to report stats at 5-minute intervals, which hides all this inefficiency.

Now my DIY system would do a 'sort of' binary search to find the correct power quickly. If a cloud came over for 10 seconds, it'd react accordingly, and within a second the charger power would be enough to keep the grid usage between -100 W and 0 W.
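For anyone wondering what I mean by a 'sort of' binary search, this is a simplified sketch of the idea, not my actual firmware. It assumes negative grid readings mean export, and `read_grid_w` is a hypothetical callable that applies a charger setpoint and returns the grid meter reading in watts.

```python
def find_charge_power(read_grid_w, max_charge_w, band=(-100.0, 0.0), max_steps=16):
    """Bisect the charger setpoint until grid power lands inside `band` (W)."""
    lo, hi = 0.0, float(max_charge_w)
    setpoint = hi
    for _ in range(max_steps):
        setpoint = (lo + hi) / 2.0
        grid_w = read_grid_w(setpoint)  # hypothetical: apply setpoint, read meter
        if grid_w > band[1]:
            hi = setpoint   # importing from the grid: charger is too greedy
        elif grid_w < band[0]:
            lo = setpoint   # exporting more than allowed: room to charge harder
        else:
            break           # inside the band, stop searching
    return setpoint


# Fake meter for illustration: 500 W of PV, 200 W of house load.
def fake_meter(charge_w, pv_w=500.0, load_w=200.0):
    return load_w + charge_w - pv_w


print(find_charge_power(fake_meter, max_charge_w=3000))  # prints 281.25
```

With the fake meter it settles in five steps. Each step halves the search range, which is why it gets there so much faster than a fixed ramp.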

So my question is: is the factory unit deliberately designed that way to avoid rapid changes to current in/out of the battery because lithium likes CC, or is it just a simple, poor linear algorithm that they came up with?

If I plugged my system into it, it would react much faster and I'd waste less energy in the 'cloud transitions', but would I really wreck the batteries by changing the charge current at the same rate the solar rises and falls when clouds pass? (That isn't really a high-frequency change.)

Please don't just chime in saying CC means constant current, yada yada. I know all that, but these factory systems vary the charge into the battery based on the power available from PV, so it's not CC; from what I've observed, it's a very, very slowly changing current. Is it deliberate, or just a bad algorithm? Is there a 'recommended acceptable change of current per second'?
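To frame that last question in amps per second, here is a rough conversion of the two rates mentioned above. The 48 V nominal pack voltage is purely an assumption for illustration (it isn't stated anywhere here), and `current_slew_a_per_s` is a made-up helper name.

```python
NOMINAL_V = 48.0  # assumption: nominal pack voltage, not given in the post


def current_slew_a_per_s(delta_w, seconds, volts=NOMINAL_V):
    """Convert a power change over a time span into a current slew rate (A/s)."""
    return (delta_w / volts) / seconds


factory = current_slew_a_per_s(350, 60)       # ~350 W per minute
cloud = current_slew_a_per_s(3000 - 500, 4)   # 3 kW to 500 W in four seconds
print(f"factory ramp ~{factory:.2f} A/s, cloud edge ~{cloud:.1f} A/s")
# prints "factory ramp ~0.12 A/s, cloud edge ~13.0 A/s"
```

So, on that assumed voltage, the question is whether roughly 13 A/s is a problem for the cells when the factory unit limits itself to roughly 0.12 A/s.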
