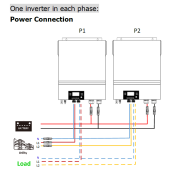

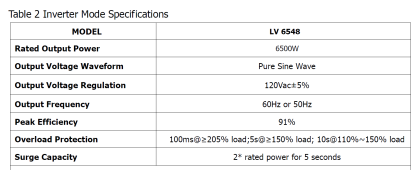

Hey y'all, I live in Texas, which means that in August we're drawing a lot of power around 3 PM to run the AC. According to my electric bill, between 3 and 4 PM on the worst day last year, I used 9.96 kWh of electricity. Divide by one hour, and that gives me an average power draw during that hour of 9.96 kW. Divide that by 240 volts and I get 41.5 amps of current. Even accounting for surge at double that, say 80A, that's only just over half of the 150A that my panel, 3/0 underground wire, and breaker at my meter are rated for. My home was built in 1980 ... and by the looks of it, none of the grid-to-house circuitry has been touched since. I'm putting in an off-grid/hybrid system (NOT feeding the grid, but connected to it for backup). My inverters cannot be near my main panel, so I'll be bypassing from the main 3/0 grid input line to an on-off-on switch to the inverters, then back to the main panel (so that I can avoid rewiring my entire house). I'm trying to size the wire run from the switch to my inverters, and that 41.5 amps seems awfully low to me. Just looking for a sanity check on my math ... thanks in advance!!
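For anyone who wants to rerun the numbers, here is a rough Python sketch of the same arithmetic. The function name and the 2x surge factor are just my own placeholders, and it assumes the whole load sits on the 240 V service at unity power factor. Keep in mind this only gives the hourly average, so short peaks inside that hour (compressor starts, oven plus dryer at once) will be higher than what it prints.

```python
def average_current_amps(energy_kwh, hours, volts=240.0):
    """Average current implied by an energy reading over a time window.

    Assumes a single 240 V service and unity power factor (both are
    simplifying assumptions, not measured values).
    """
    avg_kw = energy_kwh / hours          # kWh / h = average kW
    return avg_kw * 1000.0 / volts       # W / V = A


# Figures from the post: 9.96 kWh used between 3 and 4 PM.
avg_amps = average_current_amps(9.96, 1.0)

# Hypothetical rule of thumb from the post: double the average for surge.
surge_amps = 2 * avg_amps

# Rating of the panel, 3/0 feed, and meter breaker.
service_amps = 150.0

print(f"average current:     {avg_amps:.1f} A")
print(f"2x surge allowance:  {surge_amps:.1f} A")
print(f"share of 150A rating: {surge_amps / service_amps:.0%}")
```

Swapping in a different hour from the bill, or a different surge multiplier, is just a matter of changing the arguments.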

What am I missing? Current to house on worst day of summer is only 37.5A?

- Thread starter scottvanv

- Start date