In the solar industry, clipping describes the power lost when an inverter cannot convert all the DC power available to it and must throw away the excess. The typical DC-to-AC ratio (panel wattage divided by inverter wattage) used in the industry is 1.2, as it results in very minimal clipping.

Why not use an inverter that can capture all of it?

Economics, mainly. Panels only output their maximum rated wattage at solar noon, and only for the couple of weeks a year when they're perfectly aligned with the sun, so why pay for more inverter than you'll typically use? Let's use a 400 W panel with a 350 W microinverter as an example.

The 400 W panel is rated at STC (Standard Test Conditions). So, if your conditions commonly beat STC (average panel temperature below 77°F, elevation well above sea level, low air mass, etc.), your panels might commonly produce more than 400 W at the peak of the day.

But that's not likely, as panels get hot in the sun and you only have maximum irradiance for a short period. For example, if the inverter clips at 350 W, that's 350/400, so clipping only occurs above 87.5% of full irradiance.
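The arithmetic above can be sketched in a few lines of Python. The function names here are made up for illustration, and the sketch assumes panel output scales linearly with irradiance, which ignores temperature effects.

```python
def dc_ac_ratio(panel_w, inverter_w):
    """Panel DC rating divided by inverter AC rating."""
    return panel_w / inverter_w


def clipping_threshold(panel_w, inverter_w):
    """Fraction of rated panel output above which the inverter clips.

    Capped at 1.0: an inverter at least as large as the panel
    never clips at or below the panel's rating.
    """
    return min(1.0, inverter_w / panel_w)


ratio = dc_ac_ratio(400, 350)
threshold = clipping_threshold(400, 350)

print(f"DC/AC ratio: {ratio:.2f}")
print(f"Clipping starts above {threshold:.1%} of rated output")
```

For the 400 W panel and 350 W microinverter, this gives a ratio of about 1.14 and a threshold of 87.5%.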

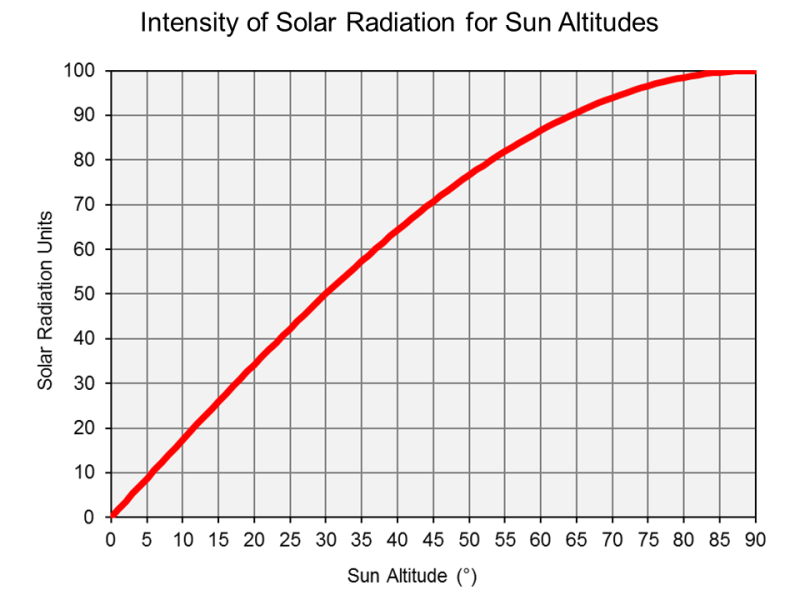

The chart shows what the sun puts out, which is what you might see if you had a two-axis tracker. Since an array will most likely be fixed, an actual curve would be much narrower, but the chart gives us the absolute maximum that clipping might cost you. 87.5% corresponds to a sun elevation of around 65°, so clipping runs from ~65° up to 90° and back down to 65°, a span of 2 × (90 − 65) = 50 degrees. One hour is 15 degrees, so 50/15 = 3.3 hours is the most you might be clipping per day.
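As a rough sketch of the same estimate, the hypothetical helper below converts a threshold elevation into hours of clipping. It uses the same simplifications as the text: the sun climbs to the peak elevation and back, and it covers 15 degrees per hour.

```python
DEGREES_PER_HOUR = 15.0  # 360 degrees / 24 hours


def clipping_hours(threshold_elevation_deg, peak_elevation_deg=90.0):
    """Upper-bound hours per day spent above the clipping threshold.

    The span is counted twice: once on the way up to the peak
    and once on the way back down.
    """
    span_deg = 2 * max(0.0, peak_elevation_deg - threshold_elevation_deg)
    return span_deg / DEGREES_PER_HOUR


hours = clipping_hours(65)
print(f"Up to {hours:.1f} hours of clipping per day")
```

With a threshold elevation of 65 degrees, this reproduces the 3.3 hour figure.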

So, in a perfectly aligned two-axis system on a perfect day, you might lose up to 50 W for 3.3 hours. The loss only reaches the full 50 W at the peak, so you need the area under the curve rather than the full rectangle; we'll say it's 0.6 of it. So, (400 W − 350 W) × 3.3 h × 0.6 = ~100 Wh per day per panel. On a fixed-panel system you'd see a lot less, typically only over the few weeks of the year when the tilt is perpendicular to the sun.
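The back-of-the-envelope loss estimate can be written as a small function. This is only a sketch: the function name is invented here, and the 0.6 shape factor is the eyeballed area-under-the-curve guess from the text, not a measured value.

```python
def daily_clipping_loss_wh(panel_w, inverter_w, hours, shape_factor):
    """Worst-case energy clipped per panel per day, in watt-hours.

    shape_factor scales the (excess watts x hours) rectangle down to
    the area actually under the clipped part of the curve.
    """
    excess_w = max(0.0, panel_w - inverter_w)
    return excess_w * hours * shape_factor


loss = daily_clipping_loss_wh(400, 350, hours=3.3, shape_factor=0.6)
print(f"Worst case: about {loss:.0f} Wh per day per panel")
```

With the numbers from the example, the result is roughly 99 Wh, which the text rounds to ~100 Wh.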


You can use SAM (NREL's System Advisor Model) to get a more realistic estimate of clipping for your panel orientation, location, and weather. Typically it's a lot less than you might think.
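Whatever tool produces the production curve, the clipping loss comes down to summing the power above the inverter limit over time. The sketch below assumes you already have evenly spaced DC power samples for one panel; the function name is hypothetical and the sample values are invented purely for illustration, not taken from SAM or any real site.

```python
def clipped_energy_wh(power_samples_w, inverter_w, step_hours):
    """Sum the energy above the inverter limit across evenly spaced samples."""
    return sum(max(0.0, p - inverter_w) * step_hours for p in power_samples_w)


# Invented half-hourly DC output around solar noon for one 400 W panel.
samples_w = [300, 340, 370, 390, 400, 390, 370, 340, 300]

lost_wh = clipped_energy_wh(samples_w, inverter_w=350, step_hours=0.5)
print(f"Clipped energy: {lost_wh:.0f} Wh")
```

A real curve with a shorter or lower peak would give a smaller number, which is the point of running a location-specific simulation.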