I'm aware that going over the PV input voltage limit for inverters is a no-no, but it seems strange to me that we have to size arrays for the max possible input (low temperatures, blue sky, bright sun). In reality, especially in the UK, panels are at suboptimal angles and not directly facing the sun, it's warmer than the minimum, and it's often hazy or cloudy, so we're running at much less than this theoretical capacity.

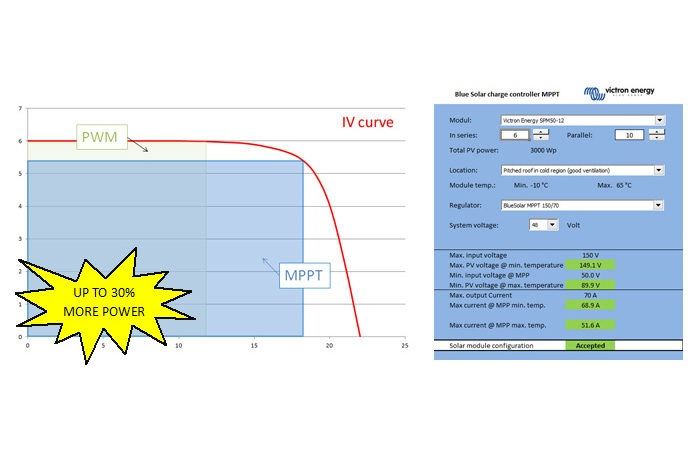

In other scenarios one would size for the more common conditions and accept losing the excess on the odd occasion it occurred, but not with solar. Is there no device that limits PV voltage on those rare but most powerful generating days, allowing me to run more panels and keep my inverter at max for more of the rest of the time? For example, if I size my array to run at just under the max input, then for most of the year I'm probably generating at about 50% of that max. Whereas if I could limit the voltage, I could size the array so that I was at, say, 80-90% of max for most of the time. Since this seems sensible, why hasn't someone invented something like this?
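To make the sizing constraint concrete, here is a rough sketch of the worst-case calculation as I understand it: scale the panel's open-circuit voltage up for the coldest expected temperature, then see how many panels fit under the inverter's input limit. All the numbers (40 V Voc, -0.28 %/°C coefficient, -10 °C minimum, 600 V inverter limit) and the function names are made up for illustration and not taken from any datasheet.

```python
import math

STC_TEMP_C = 25.0  # standard test condition cell temperature


def cold_voc(voc_stc, temp_coeff_pct_per_c, cell_temp_c):
    """Open-circuit voltage of one panel at a given cell temperature.

    Uses the usual linear approximation with the Voc temperature
    coefficient (negative, in % per degree C).
    """
    return voc_stc * (1 + temp_coeff_pct_per_c / 100 * (cell_temp_c - STC_TEMP_C))


def max_panels_per_string(inverter_max_v, voc_stc, temp_coeff_pct_per_c, min_temp_c):
    """Largest number of series panels whose cold Voc stays under the limit."""
    return math.floor(inverter_max_v / cold_voc(voc_stc, temp_coeff_pct_per_c, min_temp_c))


# Hypothetical example values, chosen only to illustrate the arithmetic.
voc_at_minus_10 = cold_voc(40.0, -0.28, -10.0)
panels = max_panels_per_string(600.0, 40.0, -0.28, -10.0)
print(f"Cold Voc per panel: {voc_at_minus_10:.2f} V, max panels per string: {panels}")
```

With these assumed figures the cold-morning voltage, not the typical operating voltage, is what caps the string length, which is the limit I'm asking about.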
