Visionquest


I'm close to completing the design research for my 12 V DIY battery. In this thread I will summarize my analysis of the heater wattage needed for my worst-case use: off-grid winter RV storage. I need the batteries to stay active enough to run a remote monitoring system.

My requirement: at ambient temperatures down to 0°F, the solar system must heat the batteries to at least 32°F so the BMS will allow charging to start. I will actually design for a 40°F heating cutoff.

Parameters/Design assumptions -

1. I will use 2" insulation board on all sides of the battery, which provides roughly R10 of insulation. Rough dimensions for my box give about 5 sq ft of surface area. According to the calculator here (https://calculator.academy/heat-loss-r-value-calculator/), I'll need about 20 BTU per hour of heating to maintain a 40°F differential. That is about 21,100 joules per hour, or roughly 6 watts. Sounds good, right? But wait...
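The conduction estimate in point 1 is Q = A·ΔT/R with US R-values (ft²·°F·h/BTU). Here is a short sketch that reproduces it and makes the BTU-per-hour to watts conversion explicit. It treats the box as flat panels and ignores corners and cable penetrations, which will add some loss in practice:

```python
# Steady-state conduction through the insulated box: Q = A * dT / R
# (US units: R-value in ft^2*F*h/BTU, area in ft^2, dT in F -> Q in BTU/hr)

BTU_TO_J = 1055.06  # joules per (IT) BTU


def heat_loss_btu_per_hr(area_ft2: float, delta_f: float, r_value: float) -> float:
    """Conductive heat loss in BTU/hr through panels of total area area_ft2."""
    return area_ft2 * delta_f / r_value


def btu_per_hr_to_watts(q_btu_hr: float) -> float:
    """1 W = 1 J/s, so BTU/hr * (J/BTU) / (s/hr) gives watts."""
    return q_btu_hr * BTU_TO_J / 3600.0


if __name__ == "__main__":
    # Figures from point 1: ~5 sq ft of R10 board, 40 F inside-outside difference
    q = heat_loss_btu_per_hr(area_ft2=5, delta_f=40, r_value=10)
    print(f"{q:.0f} BTU/hr = {q * BTU_TO_J / 1000:.1f} kJ/hr = {btu_per_hr_to_watts(q):.2f} W")
    # prints: 20 BTU/hr = 21.1 kJ/hr = 5.86 W
```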

2. The above calculation only covers maintaining a temperature difference. The other issue is how to bring the inside of the box (i.e. the cells) up from 0°F to 32°F (the worst-case scenario). The EVE 304 datasheet gives the heat capacity of a single cell as 0.9-1.2 kJ/kg·K (kilojoules per kilogram-kelvin). Using those figures, I calculated that raising a single cell by 10°F requires 123 kilojoules. Since 1 joule = 1 watt-second, dividing by 3600 seconds per hour gives 34 hours of heating at 1 watt. Of course, I want the cells warmed quickly enough to leave time in the solar day to actually charge the batteries, and there are 4 cells. To raise all four cells 32°F, the time becomes 435 hours at 1 W, 43.5 hours at 10 W, 4.35 hours at 100 W, or 2.2 hours at 200 W.
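The warm-up step in point 2 is the sensible-heat relation E = m·c·ΔT, followed by time = E / P. Below is a minimal sketch. The post does not state a cell mass, so `CELL_MASS_KG = 5.4` is an assumption to be checked against the EVE datasheet, and heat lost through the box during warm-up is ignored. Note that a temperature change of 10°F is 10/1.8 ≈ 5.6 K. Under these assumptions the sketch gives roughly 27-36 kJ per cell for a 10°F rise and about 128 hours at 1 W for the four-cell, 32°F case. Those figures are noticeably lower than the ones above, so that step may be worth rechecking.

```python
# Sensible heat to warm the cell stack: E = m * c * dT (box losses ignored)
# ASSUMPTION: CELL_MASS_KG is not from the post; check the EVE datasheet.
CELL_MASS_KG = 5.4                 # hypothetical mass of one EVE 304 Ah cell
C_RANGE_KJ_PER_KG_K = (0.9, 1.2)   # heat capacity range quoted in the post
N_CELLS = 4


def f_delta_to_k(delta_f: float) -> float:
    """Convert a temperature *difference* in F to kelvin (divide by 1.8)."""
    return delta_f / 1.8


def warmup_energy_kj(delta_f: float, c_kj_per_kg_k: float,
                     mass_kg: float = CELL_MASS_KG, n_cells: int = N_CELLS) -> float:
    """Energy (kJ) to raise n_cells cells by delta_f degrees F."""
    return n_cells * mass_kg * c_kj_per_kg_k * f_delta_to_k(delta_f)


def warmup_hours(energy_kj: float, heater_w: float) -> float:
    """Hours for a heater of heater_w watts to deliver energy_kj (1 J = 1 W*s)."""
    return energy_kj * 1000 / heater_w / 3600


if __name__ == "__main__":
    per_cell = [warmup_energy_kj(10, c, n_cells=1) for c in C_RANGE_KJ_PER_KG_K]
    print("one cell, +10 F: %.0f-%.0f kJ" % tuple(per_cell))
    stack_kj = warmup_energy_kj(32, max(C_RANGE_KJ_PER_KG_K))
    for w in (1, 10, 100, 200):
        print(f"4 cells, +32 F at {w:>3} W: {warmup_hours(stack_kj, w):.1f} h")
```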

3. I don't really want to use that much heating, and there is another factor to consider. The very thing that causes the problem (the heat capacity of the cells) also provides the solution. Once heated, the cells store roughly 500 kJ for every 10°F of temperature rise, but the box only loses around 21 kJ per hour while holding a 40°F rise over ambient. A simple first approximation says it will take almost 24 hours for the battery to self-cool from 40°F to 30°F.

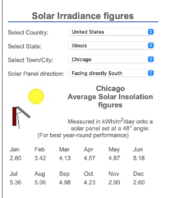
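The 24-hour figure in point 3 holds the loss constant at its 40°F-differential value. Conductive loss actually falls in proportion to the temperature difference. A lumped-capacitance (Newton cooling) model, which assumes the cells and box sit at one uniform temperature, therefore gives a somewhat longer time. The sketch below uses the post's own figures (500 kJ stored per 10°F, 21 kJ/h lost at a 40°F difference, 0°F ambient):

```python
import math

# Figures from point 3 (post's own numbers), ambient taken as 0 F
STORED_KJ_PER_10F = 500.0     # heat stored in the cells per 10 F of rise
LOSS_KJ_PER_HR_AT_40F = 21.0  # box loss while 40 F above ambient


def cooling_hours_constant_rate(stored_kj: float, loss_kj_per_hr: float) -> float:
    """First approximation: loss stays at its initial (worst-case) rate."""
    return stored_kj / loss_kj_per_hr


def cooling_hours_lumped(c_kj_per_f: float, ua_kj_per_hr_f: float,
                         dt_start_f: float, dt_end_f: float) -> float:
    """Newton cooling, d(dT)/dt = -(UA/C) * dT, solved for the time to go
    from dt_start_f to dt_end_f above ambient. Assumes one uniform temperature."""
    tau_hr = c_kj_per_f / ua_kj_per_hr_f  # thermal time constant, hours
    return tau_hr * math.log(dt_start_f / dt_end_f)


if __name__ == "__main__":
    c = STORED_KJ_PER_10F / 10.0       # kJ per F
    ua = LOSS_KJ_PER_HR_AT_40F / 40.0  # kJ per hr per F of difference
    print(f"constant rate: {cooling_hours_constant_rate(STORED_KJ_PER_10F, LOSS_KJ_PER_HR_AT_40F):.1f} h")
    print(f"lumped model:  {cooling_hours_lumped(c, ua, 40.0, 30.0):.1f} h")
```

With these inputs the constant-rate estimate comes out near 23.8 hours and the lumped model near 27.4 hours. Both scale directly with the stored-heat figure, so they shrink if that figure is revised downward.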

4. Using all of these numbers, a daily solar heating requirement can be determined. Assume an average of 6 hours of solar charging per day. The 18 hours without heat can lose about 380 kJ (18 h × 21 kJ/h). Replenishing that in 6 hours of solar charging requires roughly 63,000 joules per hour of heating, which an 18-watt heat pad can supply.
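The sizing in point 4 replaces only the heat lost during the 18 unheated hours. Because the box keeps losing heat while the pads are on, a full daily balance (pad energy over the solar window equals 24 hours of loss) comes out somewhat higher. The sketch below computes both, using the ~21 kJ/h worst-case loss from point 1 (about 5.8 W) and assuming it holds all day:

```python
# Daily heater sizing, using the worst-case ~21 kJ/hr loss from point 1.
LOSS_W = 21_000 / 3600  # about 5.83 W, assumed constant all day


def pad_watts_deficit_only(loss_w: float, dark_hours: float, sun_hours: float) -> float:
    """Point 4's approach: replace only the heat lost while unheated."""
    return loss_w * dark_hours / sun_hours


def pad_watts_daily_balance(loss_w: float, sun_hours: float, day_hours: float = 24.0) -> float:
    """Pads must also cover the loss that continues while they run:
    P * sun_hours = loss_w * day_hours."""
    return loss_w * day_hours / sun_hours


if __name__ == "__main__":
    print(f"deficit only:  {pad_watts_deficit_only(LOSS_W, 18, 6):.1f} W")
    print(f"daily balance: {pad_watts_daily_balance(LOSS_W, 6):.1f} W")
```

This gives about 17.5 W and 23.3 W respectively. Either way, the result is well below the 60-100 W planned in point 5.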

5. Since there could be several days of heavy clouds in a row, I plan to use somewhere between 60 and 100 watts of heat pads, which I think is enough, on average, to keep things warm.
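One way to gauge the margin after cloudy days is to ask how far a given pad wattage can raise the stack in a single 6-hour solar window, net of losses. The sketch below uses the post's figures of about 50 kJ per °F (500 kJ per 10°F) and ~5.86 W of loss. It ignores the 40°F cutoff and any energy diverted to charging, and the result scales inversely with the stored-heat figure:

```python
# Net temperature recovery in one solar window, for a given pad wattage.
# Post's figures: ~50 kJ stored per F (500 kJ per 10 F), ~5.86 W worst-case loss.


def recovery_f_per_window(pad_w: float, loss_w: float, sun_hours: float,
                          c_kj_per_f: float) -> float:
    """Temperature rise (F) of the cell stack over sun_hours of heating.
    Conservative: loss held at its worst-case value; ignores the 40 F cutoff."""
    net_joules = (pad_w - loss_w) * sun_hours * 3600
    return net_joules / (c_kj_per_f * 1000)


if __name__ == "__main__":
    for pad_w in (18, 60, 100):
        rise = recovery_f_per_window(pad_w, loss_w=5.86, sun_hours=6, c_kj_per_f=50)
        print(f"{pad_w:>3} W pads: about {rise:.1f} F per 6 h of sun")
```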

6. I've decided NOT to use a BMS with integrated heater control. Instead, I will use a combination of charging voltage and temperature to enable heating. My Thornwave battery monitor already has a voltage-controlled relay driver, which I will set to activate whenever more than 13.6 V is present. That output will feed the relay on this temperature controller (https://www.amazon.com/LM-YN-Thermostat-Fahrenheit-Temperature/dp/B076Y5BXD9), which then powers the heat pads. This makes it easy to adjust both the trigger voltage and the temperature set points.
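The series logic in point 6 (voltage relay AND thermostat relay) can be modeled in a few lines. In this sketch the 13.6 V threshold and 40°F cutoff come from the post. The 35°F cut-in point and the class name `HeaterController` are hypothetical, standing in for whatever differential the thermostat is configured with:

```python
from dataclasses import dataclass


@dataclass
class HeaterController:
    """Hypothetical model of two relays in series: voltage gate AND thermostat."""
    v_enable: float = 13.6    # Thornwave relay threshold from the post
    heat_on_f: float = 35.0   # HYPOTHETICAL thermostat cut-in point
    heat_off_f: float = 40.0  # heating cutoff from the post
    heating: bool = False     # thermostat latch state (hysteresis)

    def update(self, pack_volts: float, cell_temp_f: float) -> bool:
        # Thermostat with hysteresis: latch on at/below cut-in, off at/above cutoff.
        if cell_temp_f <= self.heat_on_f:
            self.heating = True
        elif cell_temp_f >= self.heat_off_f:
            self.heating = False
        # Pads get power only when charge voltage is present AND heat is called for.
        return self.heating and pack_volts > self.v_enable


if __name__ == "__main__":
    ctrl = HeaterController()
    for volts, temp_f in [(13.2, 10), (14.2, 10), (14.2, 38), (14.2, 41), (14.2, 38)]:
        print(f"{volts:.1f} V, {temp_f:>2} F -> pads {'ON' if ctrl.update(volts, temp_f) else 'off'}")
```

The hysteresis band keeps the pads from chattering around a single set point. With charge voltage present, the pads stay on from below the cut-in point until the cells reach the cutoff.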

7. I will mount heat pads on aluminum sheet in three places: the bottom and both long sides of the cell stack.

Comments and critique of this analysis are encouraged. I think my reasoning and calculations are sound, but I may have missed something entirely.

Thanks!

Bob
