@Sleeper85 ,

@MrPablo : Somewhat unrelated (and maybe obvious to everybody except me ...)

I tried switching a Test HomeAssistant Instance (basically an empty one where I just setup some MQTT entities logging) for test Purposes.

I converted the SQLite3 Database from this Test HomeAssistant Instance ({DATA_FOLDER}/home-assistant_v2.db) to PostgreSQL then finally to TimescaleDB-HA (PostgreSQL + TimescaleDB Extension + PostGIS Extension + TimescaleDB Toolkit) to see if that would Improve the Performances. This is on a KVM Virtual Machine with ZFS Pool/Dataset inside the Virtual Machine (AMD64 Host with Proxmox VE with ZFS Pool/Dataset also) running with ZFS and Compression set to LZ4.

The Migration was performed using a Script I developed. I lost basically 2 days dealing with ramdom Podman (Docker) Network Hostnames & DNS Failures that occurred on a random Basis due to some Network Configuration that for some Reason was dangling around in my temporary User Folder

.

Anyways here it is in case anybody is interested:

Script Configuration to Migrate a [SQLite3] Database to [PostgreSQL] - luckylinux/migrate-sql-database

github.com

The Script will run in Podman/Docker directly on the Host where your Docker Container Database is. The Advantage is that will NOT require any Authentication & Data Transfer over UNPROTECTED Network Connection (by default PostgreSQL is NOT using SSL, at least in PostgreSQL Docker Containers). The communication can therefore be done directly "across Containers", with no need to send data over unencrypted connections.

The Script takes care of:

- Migrate the SQLite3 Database (home-assistant_v2.db) to a (temporary) PostgreSQL using pgloader

- Fix Sequences on (temporary) PostgreSQL database using psql (otherwise you'll get lots of errors when HomeAssistant tries to write to the Database, complaining about non-Unique Keys, since the Sequences were NOT updated automatically)

- Backup the (temporary) PostgreSQL Database using pg_dump

- Initialize the (production) TimescaleDB-HA Database according to the Instructions on the TimescaleDB Website

- Import/Migrate (Restore) the (temporary) PostgreSQL Database into the (production) TimescaleDB-HA Database

- Finalize the (production) TimescaleDB-HA Database according to the Instructions on the TimescaleDB Website

Of course there is a bit of Configuration Required and you need to bring down your HomeAssistant Container before starting the actual migration (and prevent it from Starting again until the Migration is finished). On the other side you need to start the (temporary) Postgresql Database and the (production) TimescaleDB-HA Database before starting the Migration.

I plan to test it a bit better by copying the "Production" home-assistant_v2.db File to a Test Virtual Machine and try to do the Conversion with that. That will most likely spot some new Issues, since importing 6GB of Data will probably be a bit more challenging (RAM, CPU, time) than 2MB of an almost empty Database.

If Somebody is Interested in the ZFS (or Other File System) Compression Data Information:

Bash:

#!/bin/bash

# Define Folder / File

target=${1-""}

if [[ -z "$target" ]]

then

read -p "Enter the Target File or Folder to Analyze: " target

fi

# Check if File or Folder or if doesn't Exist

if [[ -d "${target}" ]]

then

echo "Analyzing Folder ${target}"

elif [[ -f "${target}" ]]

then

echo "Analyzing File ${target}"

else

echo "Target ${target} does NOT Exist. Aborting !"

exit 9

fi

# Declare Function

get_size() {

# Target File / Folder

local ltarget="${1}"

# The Arguments for "du" Command is passed by nameref

declare -n largs="$2" # Reference to output array

# Run Command and Filter Output

#local lresult=$(du ${largs[*]} "${ltarget}" | sed -E "s|^([a-zA-Z0-9_-]+)\s*?(.*)$|\1|g") # Value + Unit In one Block (e.g. "256M")

#local lresult=$(du ${largs[*]} "${ltarget}" | sed -E "s|^([0-9]+)([a-zA-Z]*?)\s*?(.*)$|\1 \2|g") # Value + Unit with Space Between them (e.g. "256 M")

local lresult=$(du ${largs[*]} "${ltarget}" | sed -E "s|^([0-9]+)(\.?)([0-9]*?)([a-zA-Z]*?)\s*?(.*)$|\1\2\3 \4|g") # Support for e.g. "5.6G" Integer Value . Decimal Value + Unit with Space Between them (e.g. "256 M")

# Return Result

echo $lresult

}

# Get Uncompressed Size

uncompressedOptionsRaw=("--summarize" "--apparent-size")

uncompressedOptionsHuman=("--summarize" "--apparent-size" "--human-readable")

uncompressedSizeRaw=$(get_size "${target}" uncompressedOptionsRaw)

uncompressedSizeHuman=$(get_size "${target}" uncompressedOptionsHuman)

# Get Compressed Size

compressedOptionsRaw=("--summarize")

compressedOptionsHuman=("--summarize" "--human-readable")

compressedSizeRaw=$(get_size "${target}" compressedOptionsRaw)

compressedSizeHuman=$(get_size "${target}" compressedOptionsHuman)

# Compute Compression Ratio

compressedRatioFormatted=$(awk -v u=${uncompressedSizeRaw} -v c=${compressedSizeRaw} 'BEGIN{printf("%.1f\n", c/u*100.0)}')

# Echo

echo "Uncompressed Size: ${uncompressedSizeHuman}B (${uncompressedSizeRaw} B)"

echo "Compressed Size: ${compressedSizeHuman}B (${compressedSizeRaw} B)"

echo "Compression Ratio: ${compressedRatioFormatted} %"

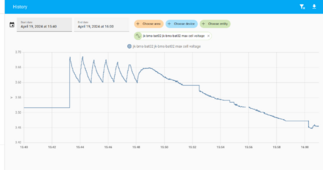

After approx. 12 hours of data Logging from a SINGLE JK BMS I get, for TimescaleDB-HA 16.2 with PostgreSQL 16.2 + PostGIS + TimescaleDB Toolkit under the Hood (which should be very high performance):

Bash:

root@TESTING:/tools# ./getSizeCompressionData.sh "/home/podman/containers/data/homeassistant-timescaledb/"

Analyzing Folder /home/podman/containers/data/homeassistant-timescaledb/

Uncompressed Size: 593 MB (606562 B)

Compressed Size: 250 MB (255859 B)

Compression Ratio: 42.2 %

My Production HomeAssistant Instance running in the Garage and Handling Solar (currently logging 2 x JK BMS + 3 x Inverters + 1 x Meteo Station) still running only the "default" SQLite3 Database home-assistant_v2.db but also on ZFS with LZ4 Compression:

Bash:

root@SOLAR:/tools# ./getSizeCompressionData.sh "/home/podman/data/homeassistant/"

Analyzing Folder /home/podman/data/homeassistant01/

Uncompressed Size: 5.7 GB (5894054 B)

Compressed Size: 3.2 GB (3293903 B)

Compression Ratio: 55.9 %

So I assume that the big File Size (approx. 0.5 GB / 12h / 1 BMS = 1GB / day / BMS) is due to the Update Frequency of the JK BMS (every 1 second, so that the controller for Automatic Charge Voltage / Automatic Charge Current can work Correctly).

But 1GB / BMS / day is A LOT of data without much use IMHO. I am also looking into using HomeAssistant "LTSS" addon to replace the Recorder Section (overall that will make HomeAssistant use 2 databases: 1 for LTSS [Long Term Data Storage] where ALL Data will be periodically dumped to and 1 for Normal Operation [only Store Data for a few Days])].

It seems like Home Assistant Recorder Purges Data older than 10 days by Default ...

Instructions on how to configure the data recorder for Home Assistant.

www.home-assistant.io

But ... if the Value does NOT Change, I do not see why that value should be re-inserted into the Database.

I didn't see any "Global" HomeAssistant Configuration for the "recorder:" Section that can limit the Sample Rate / Frequency of Sensors. I saw some Posts suggesting the Definition of "Virtual" Sensors.

One Option is maybe to Specify a "delta" Filter for each JK BMS Sensor based on:

Instructions for setting up sensor components in ESPHome.

esphome.io

E.g. for a Cell Voltage specify Maybe "delta: 0.002" [V] so that any "Measurement Noise / Tolerances" of 2mV or so will be Skipped.

Or do the RAW Sensor Value as "Internal" and only Publish the Filtered Sensor Value to API/MQTT ?

Any other Ideas ?

EDIT 1: on second thought, maybe "delta" is not very good. If every "step" is less than 0.002 V (as in my example) it will NEVER log anything

. Worst it will NEVER use that for the actual controller.

EDIT 2:

Maybe we therefore need to split EACH sensor into:

- ${sensorName} (as it is now but with internal: true)

- ${sensorName}_publish (as it is now but with internal: false and throttle: ${maximumUpdateFrequency}s

Any other ideas ?

EDIT 3:

*In theory* for a **text file** ZFS should be able to handle the compression of similar Data quite well.

But Home Assistant SQLite3 Database is **not** a text file, but it's more like a binary File. So I'm not so sure how effective ZFS Compression is in this case (I'm pretty sure that it also depends on Block Size, Ashift Value). After all ZFS Compression is NOT the same as ZFS De-Duplication Feature (the latter being VERY Memory Intensive and therefore VERY rarely used).

TimescaleDB .. Not Sure.

This is what can be found on the Internet quite Easily regarding PostgreSQL Tuning on ZFS:

And this very extensive Analysis which, among other stuff, suggests using recordsize=8K or recordsize=16K:

Looking to run Postgres on ZFS? I've gathered some of the information and sage advice out there to give you a head start on figuring out how to do it safely and efficiently.

vadosware.io

Apparently I'm using the "Defaults" of recordsize=128K

.

The "official" OpenZFS Documentation suggests recordsize=32K/64K/128K:

openzfs.github.io

)