I'm building my own off-grid LiFePO4 pack (finally) and wondering what the options are for following the specs about max charging rate at lower temperatures.

The planned pack is 280 Ah cells in 16s, so ~13 kWh. And I have ~12 kWp of PV input (cheap second-hand panels), the idea being to get enough energy even on low-light days, which we've been having a lot of recently in NSW, Australia.

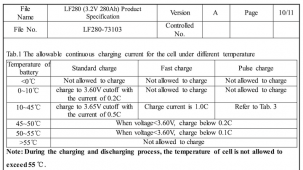

The data sheet for the EVE cells linked by the vendor I'm looking at (File no LF280-73103) has a table of allowable charging rates vs temperature and SoC. I'm not worried about cutting off charging at 0°C because it doesn't get that cold here, but it does get under 10°C a lot. On a cold sunny morning, when the sun gets over the hill and hits the panels, they might be producing near their peak. That's close to 1C, which the cells can't take until they're over 10°C. According to the cell specs I should only charge at 0.2C at that temperature, unless it's a pulse of less than 30 seconds, in which case 1C is okay as long as SoC is under 70%.
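To put rough numbers on that, here's a quick back-of-envelope sketch. It assumes a nominal 3.2 V per cell (my assumption, not a figure from the data sheet) and ignores SCC losses and the fact that pack voltage moves with SoC; the function names are just made up for illustration.

```python
# Rough C-rate arithmetic for the planned pack.
CELL_CAPACITY_AH = 280   # from the post
CELLS_IN_SERIES = 16     # from the post
NOMINAL_CELL_V = 3.2     # ASSUMPTION: typical LiFePO4 nominal voltage
PV_PEAK_W = 12_000       # from the post (~12 kWp)

PACK_NOMINAL_V = CELLS_IN_SERIES * NOMINAL_CELL_V  # 51.2 V


def c_rate_to_amps(c_rate, capacity_ah=CELL_CAPACITY_AH):
    """Convert a C-rate into a charge current in amps."""
    return c_rate * capacity_ah


def amps_to_watts(amps, pack_v=PACK_NOMINAL_V):
    """Approximate charge power at nominal pack voltage."""
    return amps * pack_v


def pv_c_rate(pv_w=PV_PEAK_W, pack_v=PACK_NOMINAL_V,
              capacity_ah=CELL_CAPACITY_AH):
    """C-rate the pack would see if all the PV power went into it."""
    return pv_w / pack_v / capacity_ah


if __name__ == "__main__":
    cold_amps = c_rate_to_amps(0.2)
    print(f"0.2C limit: {cold_amps:.0f} A, about {amps_to_watts(cold_amps):.0f} W")
    print(f"Full PV output: about {pv_c_rate():.2f}C")
```

Under those assumptions the 0.2C limit works out to roughly 56 A, or a bit under 3 kW, while the full array could push somewhere above 0.8C.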

Reading a lot on these forums it seems most people manage this by having enough battery capacity to never worry about getting over 0.2C, let alone 1C. My budget doesn't stretch that far (it'd be great if it did!)

Is there a BMS that can do this? It doesn't seem impossible to me to make a BMS that could act like a PWM charger to limit the charge current into the battery (though it might play havoc with the algorithm of the solar charge controller, or SCC). However, the consensus on these forums seems to be that BMSes are there the way insurance is: important to have, but intended to never be used.

So should I be looking for an SCC that can do this? An external temperature sensor doesn't seem like a big ask. However the extra problem here is I have 3 arrays of different panels, pointed in different directions, so I'll need 3 SCCs (or a single one with 3 MPPT inputs if that exists). How would the multiple SCCs know how much current the others are producing to avoid going over the total? And they certainly won't have an accurate idea of the SoC.
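To make the question concrete, this is roughly the logic I'd want something in the system to run. It's only a sketch: the thresholds are the continuous-charge figures I quoted above (not the full data sheet table, and it ignores the SoC-dependent pulse allowance), the array sizes are hypothetical, and none of the names correspond to any real SCC or BMS interface.

```python
# Sketch: pick a total charge-current limit from cell temperature,
# then hand each SCC a fixed share of it.
CELL_CAPACITY_AH = 280  # from the post


def allowed_charge_c_rate(cell_temp_c):
    """Continuous charge limit using only the thresholds quoted in the post.

    ASSUMPTION: no charging below 0 degC, 0.2C from 0 up to (not including)
    10 degC, 1C at 10 degC and above. Check the real data sheet table.
    """
    if cell_temp_c < 0:
        return 0.0
    if cell_temp_c < 10:
        return 0.2
    return 1.0


def split_current_limit(total_limit_a, array_peak_w):
    """Divide a total current limit between SCCs in proportion to array size."""
    total_w = sum(array_peak_w)
    if total_w <= 0:
        return [0.0 for _ in array_peak_w]
    return [total_limit_a * w / total_w for w in array_peak_w]


if __name__ == "__main__":
    arrays_w = [4000, 4000, 4000]  # HYPOTHETICAL sizes for the three arrays
    limit_a = allowed_charge_c_rate(5) * CELL_CAPACITY_AH
    for i, amps in enumerate(split_current_limit(limit_a, arrays_w), start=1):
        print(f"SCC {i}: limit {amps:.1f} A")
```

A fixed split like this is safe but wasteful: if one array is in shade, the other two can't use its share. Doing better means the controllers (or something supervising them) have to know what each one is actually delivering, which is exactly the part I don't know how to do.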

Any ideas would be most welcome, thank you.
