When the defect rate is small, you can't find the problem with sample testing.

With the melamine-contaminated gluten in pet food, the contamination rate was near 100%, so there was no problem reproducing the issue.

Dogs killed by pet jerky were a small fraction, so when the FDA tested 30 samples from store shelves, they declared the problem was not ethylene glycol. They didn't take a large enough sample size to have a realistic chance of finding one with a lethal concentration. They refused to test any open packages supplied by owners of pets that were killed. Of the samples they did test, some had zero ethylene glycol and some had varying amounts well below a lethal dose, but at higher concentrations than the standards for glycerin (one of the ingredients) allow. Their published data showed a large standard deviation, and from it I was able to compute what fraction of batches would have a lethal concentration. That fraction appeared in line with likely nationwide sales of the product and pet death rates.
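Here is a rough sketch of the two calculations behind that argument. The first gives the chance that n random shelf samples catch at least one bad batch, assuming each sample is independently bad with probability p. The second estimates the fraction of batches above a lethal threshold from a published mean and standard deviation, under the simplifying assumption that concentrations are normally distributed (data with many zeros is skewed, so a log-normal fit may be more appropriate). All numbers and function names below are hypothetical, not the FDA figures.

```python
import math
from statistics import NormalDist


def prob_detect(defect_fraction: float, n_samples: int) -> float:
    """Chance that at least one of n independent samples is defective."""
    return 1.0 - (1.0 - defect_fraction) ** n_samples


def samples_needed(defect_fraction: float, confidence: float = 0.95) -> int:
    """Smallest n giving at least `confidence` chance of catching one bad sample."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - defect_fraction))


def fraction_above(threshold: float, mean: float, sd: float) -> float:
    """Fraction of batches above `threshold`, ASSUMING a normal distribution
    with the given mean and standard deviation."""
    return 1.0 - NormalDist(mean, sd).cdf(threshold)


# Hypothetical inputs for illustration only:
p = 0.01  # suppose 1 batch in 100 carries a lethal concentration
print(f"chance that 30 samples catch one: {prob_detect(p, 30):.2f}")
print(f"samples needed for a 95% chance: {samples_needed(p)}")
# Arbitrary concentration units; lethal threshold set 2 SD above the mean
print(f"fraction of batches above threshold: {fraction_above(3.0, 1.0, 1.0):.4f}")
```

With these made-up inputs, 30 samples have only about a 26% chance of catching a single lethal batch, and roughly 300 samples would be needed for 95%. Multiplying the fraction above threshold by the number of batches sold gives an expected count that can be compared with reported deaths.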

They won't (so far) test the battery or inverter that Koldsimer has a problem with. Sound similar?

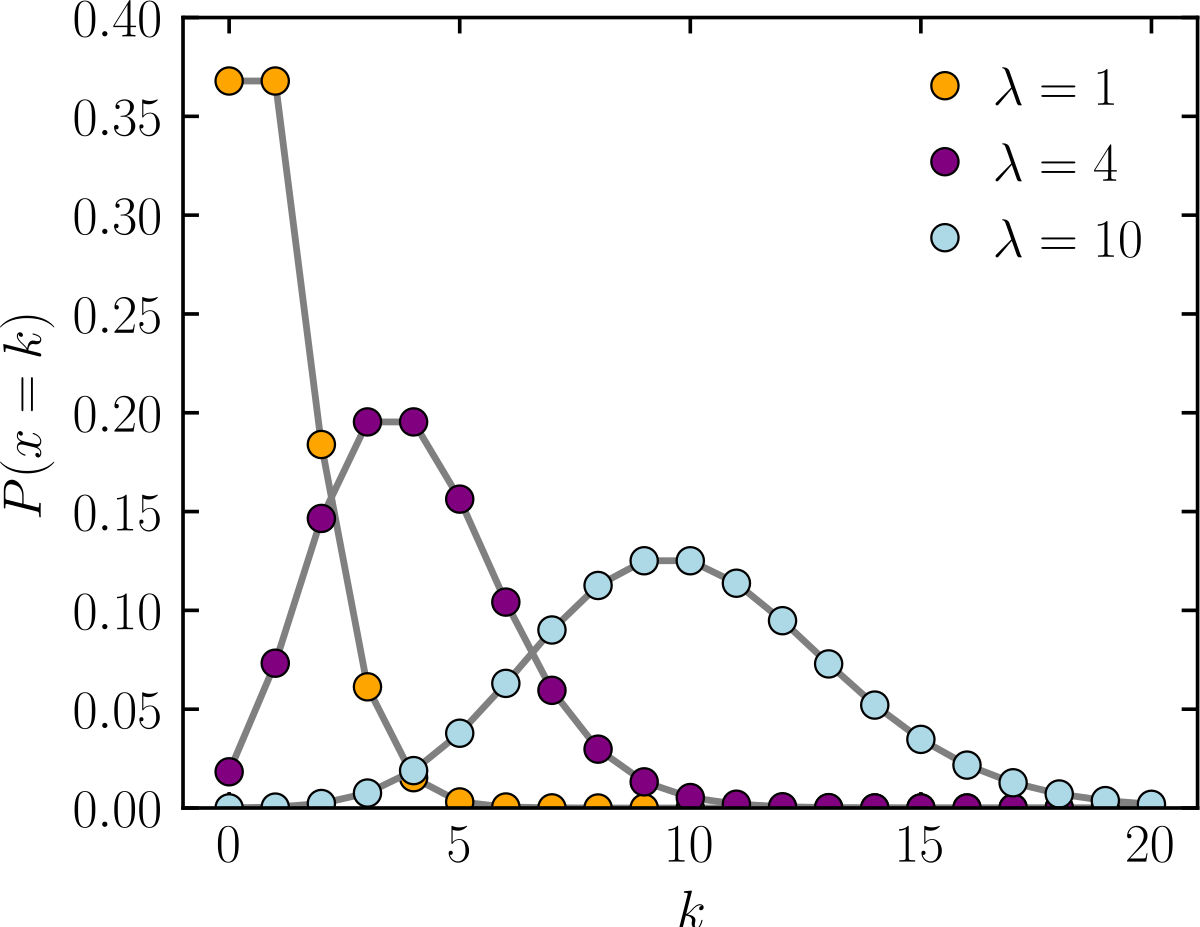

Once you have 3 or more failures, statistics begin to give narrower confidence bands. You can then calculate expected failure rates for other groups, e.g. other models.
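One way to see the bands narrowing is an exact (Garwood) Poisson confidence interval on the observed failure count. This is a sketch assuming failures are rare and independent, so a Poisson model is reasonable; it uses only the standard library, with bisection in place of chi-square quantiles. The function names are illustrative.

```python
import math


def poisson_cdf(k: int, lam: float) -> float:
    """P(X <= k) for X ~ Poisson(lam), summed term by term."""
    if k < 0:
        return 0.0
    term = math.exp(-lam)
    total = term
    for i in range(1, k + 1):
        term *= lam / i
        total += term
    return total


def _solve_decreasing(f, target: float, lo: float, hi: float, iters: int = 100) -> float:
    """Bisection for f(x) = target, where f is decreasing on [lo, hi]."""
    for _ in range(iters):
        mid = (lo + hi) / 2
        if f(mid) > target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def poisson_interval(k: int, confidence: float = 0.95) -> tuple[float, float]:
    """Exact confidence interval for the expected count, given k observed failures."""
    alpha = 1.0 - confidence
    search_hi = 10.0 * (k + 5)  # comfortably above any plausible upper limit
    if k == 0:
        lower = 0.0
    else:
        # Lower limit: P(X >= k) = alpha/2, i.e. P(X <= k-1) = 1 - alpha/2
        lower = _solve_decreasing(lambda lam: poisson_cdf(k - 1, lam),
                                  1 - alpha / 2, 0.0, search_hi)
    # Upper limit: P(X <= k) = alpha/2
    upper = _solve_decreasing(lambda lam: poisson_cdf(k, lam),
                              alpha / 2, 0.0, search_hi)
    return lower, upper


for k in (0, 1, 2, 3, 5, 10):
    lo, hi = poisson_interval(k)
    ratio = hi / lo if lo > 0 else float("inf")
    print(f"{k:2d} failures: {lo:6.3f} to {hi:6.3f}  (upper/lower = {ratio:.1f})")
```

With one failure the upper limit is over 200 times the lower one; with three it drops to about 14, and keeps shrinking from there. Dividing both limits by the number of units in service gives a failure-rate range that can be scaled to another group's sales, assuming the same underlying rate applies.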

Our federal regulatory institutions are now employing people who are incompetent in statistics, and allowing (or assigning?) them to create press releases for the public. It shows up in false reports about vaccine side effects versus the background rate, about identifying food contaminants, and about the effectiveness of automobile safety devices. Only private companies that care to do the job right protect us (and forums with consumer comments, useful so long as they are not stuffed with false data by people with an agenda, as is happening with some current health issues).

A problem with forums and user reviews is that we have only the numerator (complaints), not the denominator (number of units sold). When making buying decisions, we want to know failure rates.
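A small illustration of why the numerator alone misleads: the product with more complaints can still have the lower failure rate once sales are known. The products and figures here are hypothetical, and the sketch assumes complaints are reported at the same rate for both products.

```python
def failure_rate(complaints: int, units_sold: int) -> float:
    """Observed failure rate: complaints (numerator) over units sold (denominator)."""
    if units_sold <= 0:
        raise ValueError("units_sold must be positive")
    return complaints / units_sold


# Hypothetical products: A draws more complaints only because it sells far more.
products = {"A": (120, 400_000), "B": (15, 10_000)}
for name, (complaints, sold) in products.items():
    rate = failure_rate(complaints, sold)
    print(f"{name}: {complaints} complaints / {sold} sold = {rate * 1000:.2f} per 1,000 units")
```

Product A has eight times the complaints of B but one fifth the failure rate.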