"I'm no expert, but I'm not an idiot either. I know how to point a solar panel directly at the sun. The test was done around noon."

But still, it looks like you didn't read as far as this sentence:

"Even then, 'full sun' in December is a bit less than 'full sun' in June because the sun is lower in the sky and has to pass through more atmosphere to get to the surface of the earth."

And from Warpspeed's previous post:

"It depends very heavily on where you are, and the local climate."

For example, at 54 degrees north, "full sun" in December is a LOT less than "full sun" in June because the sun is lower in the sky.

The lower the sun, the more dense atmosphere the light has to cross, and the less energy reaches your panels, even at the ideal angle.

You said "...the days are only 10 hrs long". That means you are testing in the winter half of the year, so the sun will be low even at noon.
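
To put rough numbers on the 54°N example, here is a small Python sketch. It assumes solar noon on each solstice, a solar declination of ±23.44°, the simple 1/sin(elevation) air-mass approximation, and the Meinel & Meinel empirical clear-sky formula for direct-beam irradiance. It ignores diffuse light, haze, and altitude, so treat it as an illustration, not a prediction for anyone's array.

```python
import math

def noon_elevation_deg(latitude_deg: float, declination_deg: float) -> float:
    """Solar elevation at solar noon for a site north of the Tropic of Cancer."""
    return 90.0 - latitude_deg + declination_deg

def air_mass(elevation_deg: float) -> float:
    """Plane-parallel approximation AM = 1 / sin(elevation); gets poor below ~10 degrees."""
    return 1.0 / math.sin(math.radians(elevation_deg))

def direct_beam_kw_m2(am: float) -> float:
    """Meinel & Meinel empirical clear-sky direct beam: I = 1.353 * 0.7 ** (AM ** 0.678)."""
    return 1.353 * 0.7 ** (am ** 0.678)

LATITUDE = 54.0        # degrees north, the example latitude from the post
SOLSTICE_DECL = 23.44  # approximate solar declination at the solstices, degrees

results = {}
for label, decl in (("June", SOLSTICE_DECL), ("December", -SOLSTICE_DECL)):
    elev = noon_elevation_deg(LATITUDE, decl)
    am = air_mass(elev)
    beam = direct_beam_kw_m2(am)  # irradiance on a panel aimed straight at the sun
    results[label] = beam
    print(f"{label:8s} elevation {elev:5.1f} deg  air mass {am:4.2f}  beam {beam:.2f} kW/m^2")

print(f"December/June ratio: {results['December'] / results['June']:.2f}")
```

Under these assumptions the December noon beam comes out at roughly half the June value, even with the panel pointed directly at the sun, because the light crosses about four times as much air.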

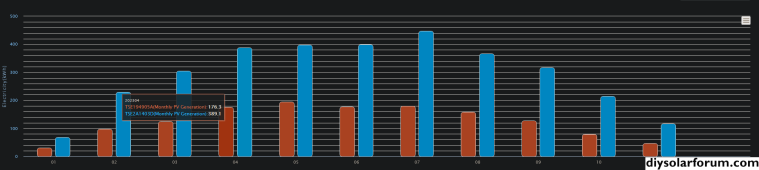

My array's tilt is best somewhere between the solstices, so in May and in January the sun is at roughly the same "not ideal" angle. But look at the difference: ~22 kWh on an ideal day in June versus 4 kWh in January. I don't know the ideal-day production for December, because where I live there are no ideal days in December.

The next factor is cloud. In June, an almost invisible cloud lowers panel output by, say, 5%. In December, the same almost invisible cloud acts like a heavy cloud for the slanting rays, and solar output drops by, say, 70%.

Then there is panel temperature. Under laboratory (STC) conditions the test is done with the panels at 25 °C. In reality, in good sun, panels may run at 40-50 °C, and output reaches maybe 80-90% of rated production. That's why NMOT (nominal module operating temperature) conditions exist.

On the other hand, in early spring, when it was -3 °C outside with a very clear sky and the sun already high enough, my panels produced 104% of their rating. True, it lasted only 5 minutes before dropping back to 95%.
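
The temperature effect is usually modelled with a linear power temperature coefficient relative to the 25 °C rating. A minimal sketch, assuming a hypothetical coefficient of -0.4 %/°C (a common order of magnitude for crystalline silicon; your datasheet has the real figure) and looking at the temperature term only, with no irradiance, soiling, or inverter losses:

```python
# Hypothetical power temperature coefficient (-0.4 %/degC); check your panel's datasheet.
GAMMA_PER_DEG_C = -0.004
STC_CELL_TEMP_C = 25.0  # cell temperature at which rated (STC) power is measured

def temperature_factor(cell_temp_c: float, gamma: float = GAMMA_PER_DEG_C) -> float:
    """Fraction of rated power from the temperature term alone: 1 + gamma * (T_cell - 25)."""
    return 1.0 + gamma * (cell_temp_c - STC_CELL_TEMP_C)

# Example cell temperatures: a cold clear day, STC, and the 40-50 degC range from the post.
for t in (5.0, 25.0, 40.0, 50.0):
    print(f"cell at {t:4.1f} degC -> {temperature_factor(t):.0%} of rated power")
```

With these assumptions, cells at 40-50 °C lose roughly 6-10% from temperature alone, while a cell well below 25 °C comes out above 100%, which is the direction of the cold-spring reading above.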